Professional Data Engineer

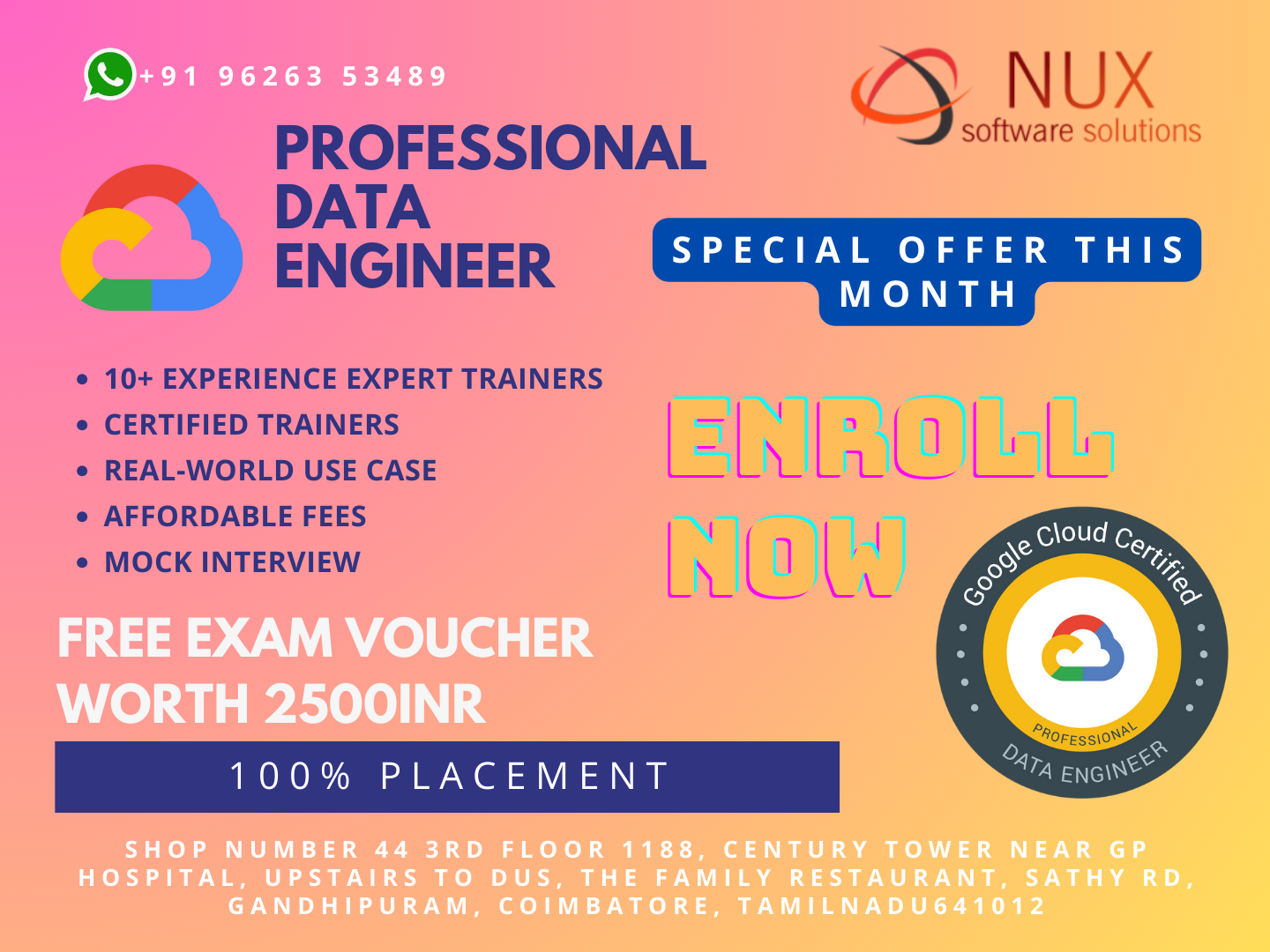

Best Professional Data Engineer Training Institute in Coimbatore.

Nux Software Training & Certification Solutions in Coimbatore proudly offers the best Professional Data Engineer training courses. Our advanced programs are designed to build practical skills through hands-on experience, and our industry-expert trainers bring a wealth of knowledge from their specialized areas.

The training center offers an excellent environment for working professionals, individuals, and corporate teams, with specialized courses built around live projects and industrial training. Our well-managed lab infrastructure is accessible 24/7 from any location. International expert trainers with strong knowledge and real-world industry experience contribute to a dynamic learning environment.

At Nux Software, our training programs combine innovative learning methods and delivery models. We start by understanding your requirements so that the training supports your career growth. Our cost-effective programs are flexible enough to meet the unique needs of each trainee. Elevate your skills with Nux Software IT Training & Certification Solutions for a rewarding and flexible learning experience.

The Google Cloud Professional Data Engineer certification has earned its place among the top-paying IT certifications, as recognized by Global Knowledge. This program equips you with the essential skills to advance your career as a professional data engineer, and it includes recommended training to help you prepare for the Google Cloud Professional Data Engineer certification, a widely recognized industry credential. Elevate your expertise and open doors to new opportunities in cloud data engineering with this respected certification.

Gain practical experience deploying solution elements, including infrastructure components such as networks, systems, and application services. Our program offers hands-on learning through a range of Qwiklabs projects, so you build real-world expertise in a practical environment. Working through the details of deploying and managing key components sharpens your skills and prepares you for the challenges of an ever-evolving technology landscape.

Upon successful completion of this program, you will earn a certificate of completion to share with your professional network and potential employers.

If you aspire to become Google Cloud certified and demonstrate your ability to design, build, and manage data processing solutions on Google Cloud that meet business objectives, you need to register for and pass the official Google Cloud Professional Data Engineer certification exam. For registration details and additional resources to support your preparation, contact Nux Software Training & Certification Solutions. We provide the guidance and support you need to start your certification journey with confidence.

Course Syllabus

Professional Data Engineer Syllabus

Designing data processing systems

- Mapping storage systems to business requirements

- Data modeling

- Trade-offs involving latency, throughput, transactions

- Distributed systems

- Schema design

- Data publishing and visualization (e.g., BigQuery)

- Batch and streaming data (e.g., Dataflow, Dataproc, Apache Beam, Apache Spark and Hadoop ecosystem, Pub/Sub, Apache Kafka)

- Online (interactive) vs. batch predictions

- Job automation and orchestration (e.g., Cloud Composer)

- Choice of infrastructure

- System availability and fault tolerance

- Use of distributed systems

- Capacity planning

- Hybrid cloud and edge computing

- Architecture options (e.g., message brokers, message queues, middleware, service-oriented architecture, serverless functions)

- At-least-once, in-order, exactly-once, and other event-processing guarantees

- Awareness of current state and how to migrate a design to a future state

- Migrating from on-premises to cloud (Data Transfer Service, Transfer Appliance, Cloud Networking)

- Validating a migration
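To illustrate one topic from this section, event-processing guarantees, here is a minimal Python sketch. It assumes a message source that delivers each event at least once, so a retry can redeliver the same event, and shows how a consumer that deduplicates on a producer-assigned event ID produces the same totals as exactly-once processing. The `Event` class, its fields, and `process_at_least_once` are hypothetical names for illustration only, not part of Pub/Sub, Dataflow, or any other Google Cloud API.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Set


@dataclass(frozen=True)
class Event:
    event_id: str  # unique ID assigned by the producer (assumption)
    account: str
    amount: int


def process_at_least_once(events: Iterable[Event]) -> Dict[str, int]:
    """Sum amounts per account, ignoring redelivered duplicates.

    With at-least-once delivery the same event may arrive more than once.
    Remembering which event IDs have already been applied makes the
    consumer idempotent, so the totals match exactly-once processing.
    """
    seen: Set[str] = set()
    totals: Dict[str, int] = {}
    for event in events:
        if event.event_id in seen:
            continue  # duplicate delivery: skip instead of double-counting
        seen.add(event.event_id)
        totals[event.account] = totals.get(event.account, 0) + event.amount
    return totals


if __name__ == "__main__":
    # "e2" is delivered twice, as can happen after a retry.
    stream = [
        Event("e1", "alice", 10),
        Event("e2", "bob", 5),
        Event("e2", "bob", 5),
        Event("e3", "alice", 7),
    ]
    print(process_at_least_once(stream))  # {'alice': 17, 'bob': 5}
```

The in-memory `seen` set grows without bound; a real pipeline would typically limit it to a time window or keep it in durable storage, and managed services such as Pub/Sub and Dataflow offer their own delivery-guarantee options.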

Building and operationalizing data processing systems

- Effective use of managed services (Cloud Bigtable, Cloud Spanner, Cloud SQL, BigQuery, Cloud Storage, Datastore, Memorystore)

- Storage costs and performance

- Life cycle management of data

- Data cleansing

- Batch and streaming

- Transformation

- Data acquisition and import

- Integrating with new data sources

- Provisioning resources

- Monitoring pipelines

- Adjusting pipelines

- Testing and quality control
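Data cleansing and quality control from the list above can be sketched in plain Python. This minimal example assumes a hypothetical record schema with `customer_id`, `country`, and `amount` fields: it trims and normalizes values, rejects rows that are missing required fields or have an invalid amount, and applies a simple quality gate that fails a batch when too many rows are rejected. The field names, the non-negative-amount rule, and the 10% rejection threshold are illustrative assumptions, not Google Cloud defaults.

```python
from typing import Dict, List, Optional, Tuple

Record = Dict[str, str]

REQUIRED_FIELDS = ("customer_id", "country", "amount")  # hypothetical schema


def clean_record(raw: Record) -> Optional[Dict[str, object]]:
    """Return a normalized record, or None if it fails basic validation."""
    # Trim whitespace; empty strings count as missing values.
    values = {k: (v or "").strip() for k, v in raw.items()}
    if any(not values.get(field) for field in REQUIRED_FIELDS):
        return None
    try:
        amount = float(values["amount"])
    except ValueError:
        return None  # non-numeric amount
    if amount < 0:
        return None  # assumed business rule: amounts must be non-negative
    return {
        "customer_id": values["customer_id"],
        "country": values["country"].upper(),  # normalize country codes
        "amount": amount,
    }


def cleanse(rows: List[Record]) -> Tuple[List[Dict[str, object]], int]:
    """Clean a batch and report how many rows were rejected."""
    cleaned: List[Dict[str, object]] = []
    rejected = 0
    for row in rows:
        result = clean_record(row)
        if result is None:
            rejected += 1
        else:
            cleaned.append(result)
    return cleaned, rejected


def passes_quality_gate(total: int, rejected: int, max_reject_ratio: float = 0.1) -> bool:
    """Quality control check: fail the batch if too many rows were rejected."""
    if total == 0:
        return True
    return rejected / total <= max_reject_ratio


if __name__ == "__main__":
    batch = [
        {"customer_id": "c1", "country": " in ", "amount": "12.50"},
        {"customer_id": "c2", "country": "US", "amount": "abc"},
        {"customer_id": "", "country": "GB", "amount": "3"},
    ]
    good, bad = cleanse(batch)
    print(good)  # [{'customer_id': 'c1', 'country': 'IN', 'amount': 12.5}]
    print(bad)  # 2
    print(passes_quality_gate(len(batch), bad))  # False: 2 of 3 rows rejected
```

Counting rejected rows, rather than silently dropping them, gives pipeline monitoring a metric to alert on.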

Operationalizing machine learning models

- ML APIs (e.g., Vision API, Speech API)

- Customizing ML APIs (e.g., AutoML Vision, AutoML text)

- Conversational experiences (e.g., Dialogflow)

- Ingesting appropriate data

- Retraining of machine learning models (AI Platform Prediction and Training, BigQuery ML, Kubeflow, Spark ML)

- Continuous evaluation

- Distributed vs. single machine

- Use of edge compute

- Hardware accelerators (e.g., GPU, TPU)

- Machine learning terminology (e.g., features, labels, models, regression, classification, recommendation, supervised and unsupervised learning, evaluation metrics)

- Impact of dependencies of machine learning models

- Common sources of error (e.g., assumptions about data)
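The machine learning terminology and common sources of error above come together in evaluation metrics. The minimal sketch below computes accuracy, precision, and recall for binary labels and shows how an assumption about the data (here, roughly balanced classes) can make accuracy misleading: a model that always predicts the majority class scores high accuracy while finding none of the positive examples. The function name and the example labels are illustrative, and returning 0.0 when precision or recall is undefined is a convention chosen for this sketch.

```python
from typing import Dict, Sequence


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, and recall for binary labels (1 = positive)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    correct = sum(1 for t, p in pairs if t == p)
    accuracy = correct / len(pairs) if pairs else 0.0
    # Undefined ratios (zero denominator) are reported as 0.0 by convention.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall}


if __name__ == "__main__":
    # Imbalanced labels: 2 positives out of 20 examples.
    labels = [1, 1] + [0] * 18
    # A model that always predicts the majority (negative) class.
    always_negative = [0] * 20
    print(classification_metrics(labels, always_negative))
    # {'accuracy': 0.9, 'precision': 0.0, 'recall': 0.0}
```

High accuracy with zero recall is a signal to check the class balance of the data before trusting a single metric.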

Ensuring solution quality

- Identity and access management (e.g., Cloud IAM)

- Data security (encryption, key management)

- Ensuring privacy (e.g., Data Loss Prevention API)

- Legal compliance (e.g., Health Insurance Portability and Accountability Act (HIPAA), Children's Online Privacy Protection Act (COPPA), FedRAMP, General Data Protection Regulation (GDPR))

- Building and running test suites

- Pipeline monitoring (e.g., Cloud Monitoring)

- Assessing, troubleshooting, and improving data representations and data processing infrastructure

- Resizing and autoscaling resources

- Performing data preparation and quality control (e.g., Dataprep)

- Verification and monitoring

- Planning, executing, and stress testing data recovery (fault tolerance, rerunning failed jobs, performing retrospective re-analysis)

- Choosing between ACID, idempotent, and eventually consistent requirements

- Mapping to current and future business requirements

- Designing for data and application portability (e.g., multicloud, data residency requirements)

- Data staging, cataloging, and discovery
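Several items in this section, such as rerunning failed jobs and choosing idempotent requirements, depend on one property: a job is safe to retry only if running it twice leaves the same result as running it once. The minimal Python sketch below assumes a hypothetical table stored as a dictionary of partitions. Each load overwrites its whole partition instead of appending, and a small retry helper reruns the job with exponential backoff. The names `load_partition` and `run_with_retries`, and treating `RuntimeError` as a transient failure, are assumptions made for illustration.

```python
import time
from typing import Callable, Dict, List

# Hypothetical target table: partition key -> rows.
Table = Dict[str, List[int]]


def load_partition(table: Table, partition: str, rows: List[int]) -> None:
    """Idempotent load: replace the whole partition, never append to it."""
    table[partition] = list(rows)


def run_with_retries(step: Callable[[], None], max_attempts: int = 3,
                     base_delay_s: float = 0.01) -> int:
    """Run step, retrying with exponential backoff; return attempts used."""
    for attempt in range(1, max_attempts + 1):
        try:
            step()
            return attempt
        except RuntimeError:  # assumed to signal a transient failure
            if attempt == max_attempts:
                raise
            time.sleep(base_delay_s * 2 ** (attempt - 1))
    raise AssertionError("unreachable")


if __name__ == "__main__":
    table: Table = {}
    calls = {"n": 0}

    def flaky_job() -> None:
        calls["n"] += 1
        load_partition(table, "2024-01-01", [1, 2, 3])
        if calls["n"] == 1:
            # Simulate a failure after the write, e.g. a lost acknowledgement.
            raise RuntimeError("transient failure")

    attempts = run_with_retries(flaky_job)
    print(attempts, table)  # 2 {'2024-01-01': [1, 2, 3]}
```

Because the first attempt fails after its write, an append-based load would have duplicated the rows; overwriting the partition keeps the retried result correct.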